How to block junk search-engine spiders with nginx and robots.txt in BT Panel (宝塔面板) and reduce server load

- 2024-08-26

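Besides the familiar search engines such as Baidu, Google, Sogou, and 360, many other crawlers exist. They usually bring no traffic to your site, yet they crawl heavily, tying up server resources and wasting the host's CPU and bandwidth. To fix this, follow the steps below.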

Method 1: Block specified User-Agents in nginx through BT Panel

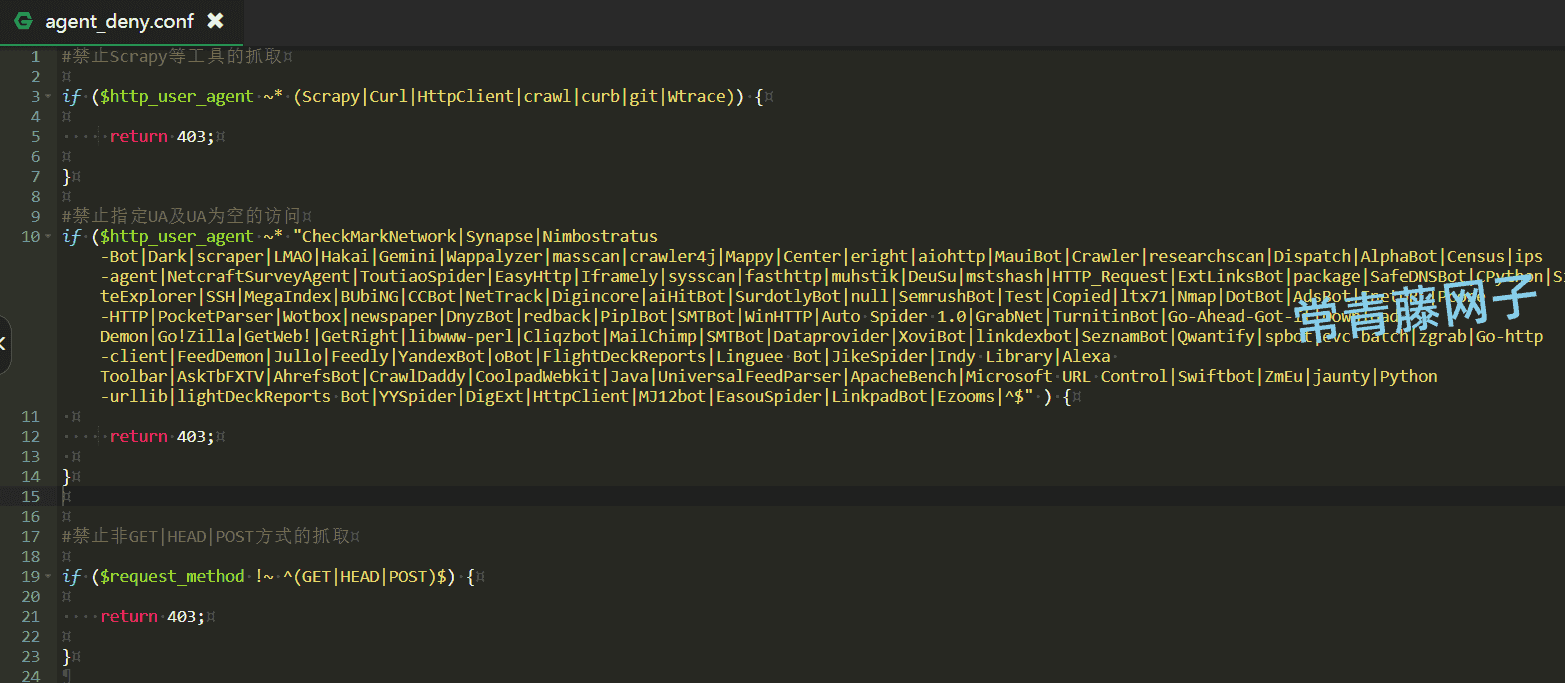

1. Go to the /www/server/nginx/conf directory and create a new file named agent_deny.conf (any other name works too). Open the new file for editing, paste in the code below, and save it.

#Block scraping tools such as Scrapy

if ($http_user_agent ~* (Scrapy|HttpClient|crawl|curb|git|Wtrace)) {

return 403;

}

#Block the listed UAs and requests with an empty UA

if ($http_user_agent ~* "CheckMarkNetwork|Synapse|Nimbostratus-Bot|Dark|scraper|LMAO|Hakai|Gemini|Wappalyzer|masscan|crawler4j|Mappy|Center|eright|aiohttp|MauiBot|Crawler|researchscan|Dispatch|AlphaBot|Census|ips-agent|NetcraftSurveyAgent|ToutiaoSpider|EasyHttp|Iframely|sysscan|fasthttp|muhstik|DeuSu|mstshash|HTTP_Request|ExtLinksBot|package|SafeDNSBot|CPython|SiteExplorer|SSH|MegaIndex|BUbiNG|CCBot|NetTrack|Digincore|aiHitBot|SurdotlyBot|null|SemrushBot|Test|Copied|ltx71|Nmap|DotBot|AdsBot|InetURL|Pcore-HTTP|PocketParser|Wotbox|newspaper|DnyzBot|redback|PiplBot|SMTBot|WinHTTP|Auto Spider 1.0|GrabNet|TurnitinBot|Go-Ahead-Got-It|Download Demon|Go!Zilla|GetWeb!|GetRight|libwww-perl|Cliqzbot|MailChimp|SMTBot|Dataprovider|XoviBot|linkdexbot|SeznamBot|Qwantify|spbot|evc-batch|zgrab|Go-http-client|FeedDemon|Jullo|Feedly|YandexBot|oBot|FlightDeckReports|Linguee Bot|JikeSpider|Indy Library|Alexa Toolbar|AskTbFXTV|AhrefsBot|CrawlDaddy|CoolpadWebkit|Java|UniversalFeedParser|ApacheBench|Microsoft URL Control|Swiftbot|ZmEu|jaunty|Python-urllib|lightDeckReports Bot|YYSpider|DigExt|HttpClient|MJ12bot|EasouSpider|LinkpadBot|Ezooms|^$" ) {

return 403;

}

#Block request methods other than GET, HEAD, and POST

if ($request_method !~ ^(GET|HEAD|POST)$) {

return 403;

}

As shown in the screenshot:

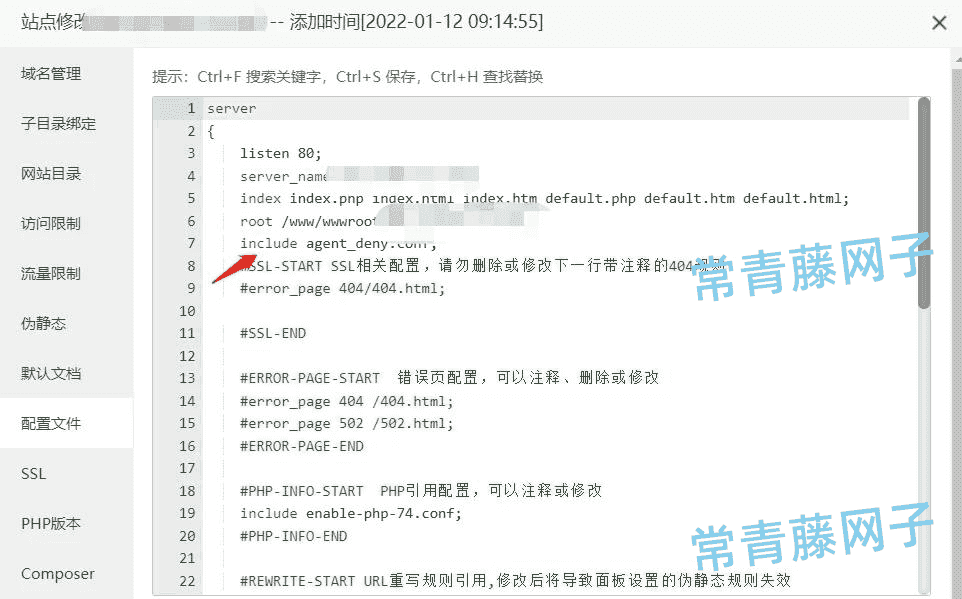

2. Go to [Website] > [Settings], click the [Config File] tab on the left, and insert this line at around line 7 or 8: include agent_deny.conf;

Save the change and restart nginx. From then on, these spiders and scanning tools receive a 403 Forbidden response when they request the site.
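One way to confirm that the rule is live is to request a page with different User-Agent headers and compare the status codes. The following is a minimal sketch using only Python's standard library; the function name `status_for` and the URL in the usage note are placeholders chosen for illustration, not part of the original setup.

```python
import urllib.error
import urllib.request


def status_for(url: str, user_agent: str, timeout: float = 10.0) -> int:
    """Return the HTTP status code the server sends for the given User-Agent."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # urllib raises on 4xx/5xx responses; the status code is still
        # exactly what we want to inspect, so return it instead.
        return err.code
```

For example, `status_for("https://example.com/", "AhrefsBot")` should return 403 once the include is active, while the same call with an ordinary browser User-Agent should still return 200 (replace the placeholder URL with your own site).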

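Note: if your site publishes content through the 火车头 collector (LocoySpider), the code above returns 403 to it and publishing fails. To keep using 火车头 for publishing, use the code below instead, which drops the empty-UA pattern ^$ from the second rule: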

#Block scraping tools such as Scrapy

if ($http_user_agent ~* (Scrapy|HttpClient|crawl|curb|git|Wtrace)) {

return 403;

}

#Block the listed UAs (empty UAs are allowed in this variant)

if ($http_user_agent ~* "CheckMarkNetwork|Synapse|Nimbostratus-Bot|Dark|scraper|LMAO|Hakai|Gemini|Wappalyzer|masscan|crawler4j|Mappy|Center|eright|aiohttp|MauiBot|Crawler|researchscan|Dispatch|AlphaBot|Census|ips-agent|NetcraftSurveyAgent|ToutiaoSpider|EasyHttp|Iframely|sysscan|fasthttp|muhstik|DeuSu|mstshash|HTTP_Request|ExtLinksBot|package|SafeDNSBot|CPython|SiteExplorer|SSH|MegaIndex|BUbiNG|CCBot|NetTrack|Digincore|aiHitBot|SurdotlyBot|null|SemrushBot|Test|Copied|ltx71|Nmap|DotBot|AdsBot|InetURL|Pcore-HTTP|PocketParser|Wotbox|newspaper|DnyzBot|redback|PiplBot|SMTBot|WinHTTP|Auto Spider 1.0|GrabNet|TurnitinBot|Go-Ahead-Got-It|Download Demon|Go!Zilla|GetWeb!|GetRight|libwww-perl|Cliqzbot|MailChimp|SMTBot|Dataprovider|XoviBot|linkdexbot|SeznamBot|Qwantify|spbot|evc-batch|zgrab|Go-http-client|FeedDemon|Jullo|Feedly|YandexBot|oBot|FlightDeckReports|Linguee Bot|JikeSpider|Indy Library|Alexa Toolbar|AskTbFXTV|AhrefsBot|CrawlDaddy|CoolpadWebkit|Java|UniversalFeedParser|ApacheBench|Microsoft URL Control|Swiftbot|ZmEu|jaunty|Python-urllib|lightDeckReports Bot|YYSpider|DigExt|HttpClient|MJ12bot|EasouSpider|LinkpadBot|Ezooms" ) {

return 403;

}

#Block request methods other than GET, HEAD, and POST

if ($request_method !~ ^(GET|HEAD|POST)$) {

return 403;

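}

Once this is set up, you can run a simulated crawl to check whether any legitimate spiders are blocked by mistake. Note that the spider names blocked above do not include the following six common spiders: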

Baidu: Baiduspider

Google: Googlebot

Bing: bingbot

Sogou: Sogou web spider

360: 360Spider

Shenma: YisouSpider
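The claim that these six spiders pass the filter can be checked offline before touching the server. The sketch below re-creates the two User-Agent rules with Python's `re` module, which behaves like nginx's regex engine for these simple alternations. The sample User-Agent strings are illustrative stand-ins rather than captured traffic, and the helper name `is_blocked` is an assumption made for this example.

```python
import re

# First rule in agent_deny.conf (tools such as Scrapy).
TOOLS = r"(Scrapy|HttpClient|crawl|curb|git|Wtrace)"

# Second rule, copied from the list above and split across lines for readability.
NAMED = (
    "CheckMarkNetwork|Synapse|Nimbostratus-Bot|Dark|scraper|LMAO|Hakai|Gemini|"
    "Wappalyzer|masscan|crawler4j|Mappy|Center|eright|aiohttp|MauiBot|Crawler|"
    "researchscan|Dispatch|AlphaBot|Census|ips-agent|NetcraftSurveyAgent|"
    "ToutiaoSpider|EasyHttp|Iframely|sysscan|fasthttp|muhstik|DeuSu|mstshash|"
    "HTTP_Request|ExtLinksBot|package|SafeDNSBot|CPython|SiteExplorer|SSH|"
    "MegaIndex|BUbiNG|CCBot|NetTrack|Digincore|aiHitBot|SurdotlyBot|null|"
    "SemrushBot|Test|Copied|ltx71|Nmap|DotBot|AdsBot|InetURL|Pcore-HTTP|"
    "PocketParser|Wotbox|newspaper|DnyzBot|redback|PiplBot|SMTBot|WinHTTP|"
    "Auto Spider 1.0|GrabNet|TurnitinBot|Go-Ahead-Got-It|Download Demon|"
    "Go!Zilla|GetWeb!|GetRight|libwww-perl|Cliqzbot|MailChimp|SMTBot|"
    "Dataprovider|XoviBot|linkdexbot|SeznamBot|Qwantify|spbot|evc-batch|zgrab|"
    "Go-http-client|FeedDemon|Jullo|Feedly|YandexBot|oBot|FlightDeckReports|"
    "Linguee Bot|JikeSpider|Indy Library|Alexa Toolbar|AskTbFXTV|AhrefsBot|"
    "CrawlDaddy|CoolpadWebkit|Java|UniversalFeedParser|ApacheBench|"
    "Microsoft URL Control|Swiftbot|ZmEu|jaunty|Python-urllib|"
    "lightDeckReports Bot|YYSpider|DigExt|HttpClient|MJ12bot|EasouSpider|"
    "LinkpadBot|Ezooms"
)

# Illustrative User-Agent strings for the six spiders that should pass.
GOOD_SPIDERS = {
    "Baidu": "Mozilla/5.0 (compatible; Baiduspider/2.0; +http://www.baidu.com/search/spider.html)",
    "Google": "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)",
    "Bing": "Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)",
    "Sogou": "Sogou web spider/4.0(+http://www.sogou.com/docs/help/webmasters.htm#07)",
    "360": "Mozilla/5.0 (compatible; 360Spider)",
    "Shenma": "Mozilla/5.0 (compatible; YisouSpider/5.0)",
}


def is_blocked(user_agent: str, block_empty: bool = True) -> bool:
    """Mimic the two `~*` (case-insensitive) User-Agent checks.

    block_empty=True corresponds to the first version of the rules, which
    ends in |^$; block_empty=False corresponds to the 火车头 variant.
    """
    named = NAMED + ("|^$" if block_empty else "")
    return bool(
        re.search(TOOLS, user_agent, re.IGNORECASE)
        or re.search(named, user_agent, re.IGNORECASE)
    )


for name, ua in GOOD_SPIDERS.items():
    print(name, "blocked" if is_blocked(ua) else "allowed")
```

The same helper is handy for spotting over-broad tokens. Because the match is a case-insensitive substring search, short entries such as Center or Test also catch any ordinary User-Agent that happens to contain them, for instance an old Internet Explorer string that includes "Media Center PC".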

Common crawler User-Agents and what they are typically used for:

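FeedDemon: content scraping

BOT/0.1 (BOT for JCE): SQL injection

CrawlDaddy: SQL injection

Java: content scraping

Jullo: content scraping

Feedly: content scraping

UniversalFeedParser: content scraping

ApacheBench: CC attack tool

Swiftbot: useless crawler

YandexBot: useless crawler

AhrefsBot: useless crawler

JikeSpider: useless crawler

MJ12bot: useless crawler

ZmEu: phpMyAdmin vulnerability scanning

WinHttp: scraping, CC attacks

EasouSpider: useless crawler

HttpClient: TCP attacks

Microsoft URL Control: scanning

YYSpider: useless crawler

jaunty: WordPress brute-force scanner

oBot: useless crawler

Python-urllib: content scraping

Indy Library: scanning

FlightDeckReports Bot: useless crawler

Linguee Bot: useless crawler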

Method 2: Block via the site's robots.txt

User-agent: AhrefsBot

Disallow: /

User-agent: DotBot

Disallow: /

User-agent: SemrushBot

Disallow: /

User-agent: Uptimebot

Disallow: /

User-agent: MJ12bot

Disallow: /

User-agent: MegaIndex.ru

Disallow: /

User-agent: ZoominfoBot

Disallow: /

User-agent: Mail.Ru

Disallow: /

User-agent: SeznamBot

Disallow: /

User-agent: BLEXBot

Disallow: /

User-agent: ExtLinksBot

Disallow: /

User-agent: aiHitBot

Disallow: /

User-agent: Researchscan

Disallow: /

User-agent: DnyzBot

Disallow: /

User-agent: spbot

Disallow: /

User-agent: YandexBot

Disallow: /
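To sanity-check rules like these before publishing them, Python's standard `urllib.robotparser` can parse the text and report which agents may fetch a path. The sketch below uses an abbreviated copy of the list above (four entries only), and the helper name `allowed` is an assumption made for this example. Each `User-agent` line sits directly above its `Disallow` line, because this parser treats a blank line as the end of a group.

```python
from urllib.robotparser import RobotFileParser

# Abbreviated sample of the rules above; extend it with the remaining entries.
ROBOTS_TXT = """\
User-agent: AhrefsBot
Disallow: /

User-agent: SemrushBot
Disallow: /

User-agent: MJ12bot
Disallow: /

User-agent: YandexBot
Disallow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def allowed(agent: str, path: str = "/") -> bool:
    """Return True if the agent may fetch the path under ROBOTS_TXT.

    Pass the bare product token (e.g. "AhrefsBot"), not a full
    "Mozilla/5.0 (...)" header: the parser only looks at the text
    before the first slash of the agent string.
    """
    return parser.can_fetch(agent, path)


for agent in ("AhrefsBot", "YandexBot", "Baiduspider", "Googlebot"):
    print(agent, "allowed" if allowed(agent) else "disallowed")
```

Keep in mind that robots.txt is only a request: crawlers that ignore it still have to be stopped by the nginx rules from Method 1.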